Beyond Chatbots: Why Deterministic Reasoning Is the Future

For the past few years, the conversation around artificial intelligence in the enterprise has been dominated by the dazzling capabilities of large language models (LLMs). We’ve seen them generate creative text, summarize complex documents, and power chatbots that can converse with startling fluency. This was the era of probabilistic AI, and its promise of human-like reasoning seemed boundless.

But as 2025 drew to a close, a quiet but significant shift occurred. The initial euphoria of impressive demos gave way to the sobering reality of enterprise deployment, and the industry began to understand a critical lesson: in a business environment, sounding human is not the same as being reliable.

The most forward-thinking leaders are no longer asking what AI can do in a sandbox. They are asking what it can be trusted to do at scale.

Answering that question has forced an architectural reckoning: a move away from the unpredictable nature of pure probabilistic guesswork and toward a future built on a foundation of deterministic reasoning.

The Year Autonomy Hit a Wall

The story of enterprise AI in 2025 was not one of model failure, but of system failure. The promise of fully autonomous agents that could reason and act independently collided with the messy reality of complex enterprise systems. As one post-mortem analysis put it, AI didn’t fail because the models weren’t smart enough; it failed because the systems they were dropped into weren’t legible enough [1].

Autonomous agents exposed years of hidden “metadata debt” inside corporate platforms. When an AI agent couldn’t definitively understand why a user had certain permissions, which validation rule should take precedence, or what downstream processes depended on a specific field, it couldn’t be trusted to act.

In the world of enterprise systems, “almost always” correct is just another way of saying 100% broken. The result was a stall. AI pilots that looked brilliant in isolation failed to cross the “last mile” to production because their probabilistic nature made them an unacceptable liability.
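A back-of-the-envelope model shows why "almost always" falls short. Assume, purely for illustration, that an agent runs a workflow of n steps and that each step is independently correct with probability p. These numbers are hypothetical and do not come from the cited post-mortem.

```latex
P(\text{all } n \text{ steps correct}) = p^{n}
\qquad
0.99^{20} \approx 0.818
\qquad
0.99^{100} \approx 0.366
```

Under these assumptions, an agent that is right 99% of the time at each step still makes at least one error in nearly one in five 20-step runs, and in about two out of three 100-step runs.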

The Architectural Correction: Determinism as a Foundation

In response to this reality, the market leaders began to change their tune. Salesforce, a major proponent of agentic AI, acknowledged the challenge and shifted its narrative away from pure probabilistic execution. As they noted in late 2025, “You can’t cross your fingers that your AI behaves correctly. You need to know it will, every single time” [2].

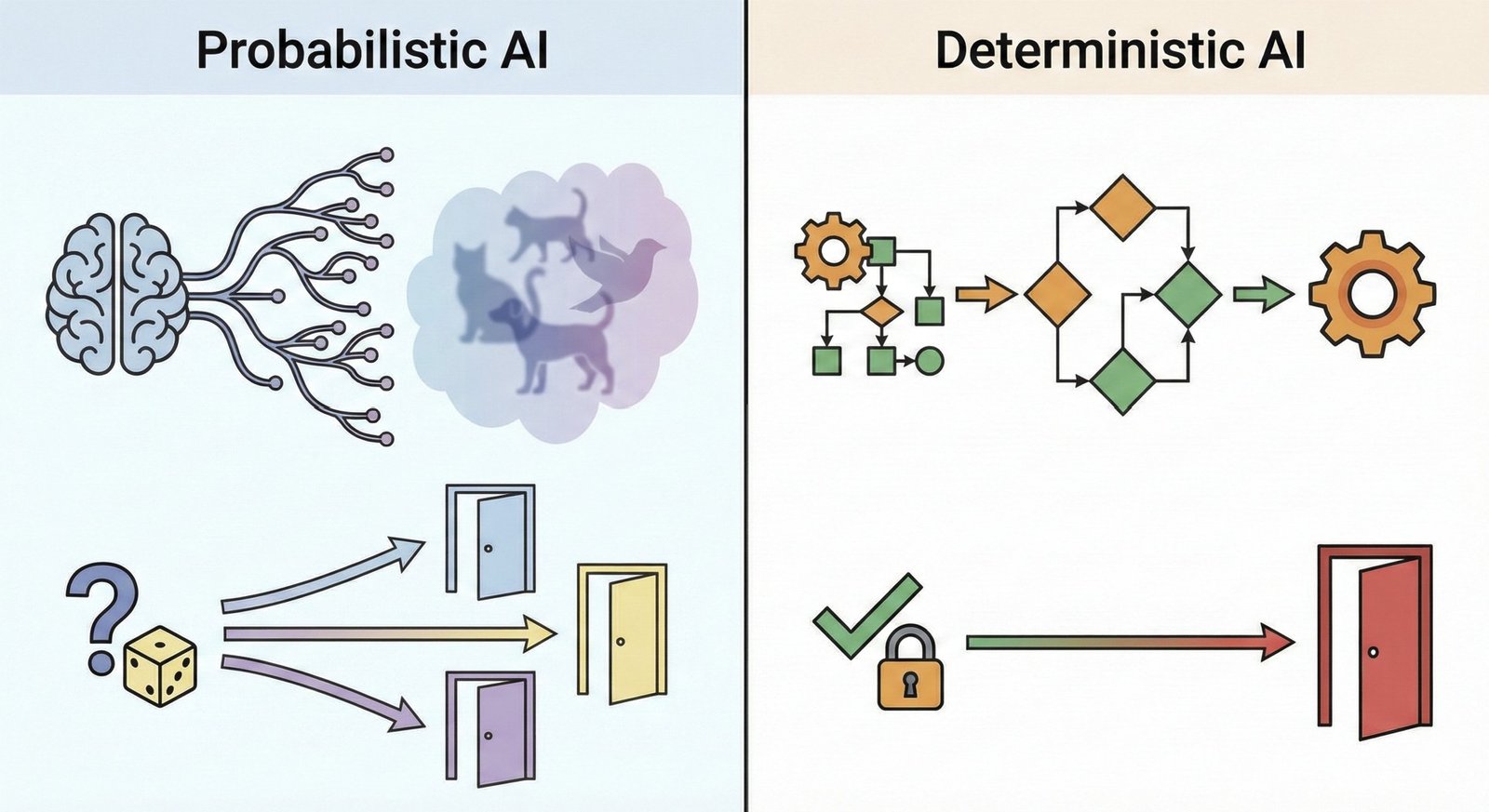

This led to a crucial architectural correction across the industry: the rise of the hybrid model, which pairs the flexibility of LLMs with the precision and reliability of deterministic frameworks. It treats probabilistic and deterministic reasoning not as competing philosophies but as essential partners.

| Feature | Probabilistic AI (LLMs) | Deterministic AI (Rules-Based) |
|---|---|---|
| Core Function | Generates statistically likely outcomes | Executes predefined, logical rules |
| Best Use Case | Brainstorming, summarization, intent recognition | Compliance, calculations, routing, billing |
| Hallucination Risk | High | Non-existent |
| Auditability | Low (Opaque “black box”) | High (Transparent “glass box”) |
| Predictability | Variable | 100% repeatable |
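As a rough sketch of that division of labor, the Python below uses a hypothetical `classify_intent_stub` function as a stand-in for an LLM call (a simple keyword match, so the example runs offline) and a hypothetical `ROUTES` table. The probabilistic step only proposes an intent; a deterministic layer decides what actually happens, and anything it cannot map with enough confidence goes to human review. The 0.8 threshold and the queue names are illustrative assumptions, not a vendor's design.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Proposal:
    intent: str        # label suggested by the probabilistic component
    confidence: float  # model's self-reported confidence, 0.0 to 1.0


def classify_intent_stub(message: str) -> Proposal:
    """Hypothetical stand-in for an LLM call; a real system would query a model here."""
    text = message.lower()
    if "refund" in text:
        return Proposal("refund_request", 0.92)
    if "invoice" in text:
        return Proposal("billing_question", 0.71)
    return Proposal("unknown", 0.30)


# Deterministic routing table: the same intent always maps to the same queue.
ROUTES: Dict[str, str] = {
    "refund_request": "payments-team",
    "billing_question": "billing-team",
}


def route(message: str,
          classify: Callable[[str], Proposal] = classify_intent_stub,
          min_confidence: float = 0.8) -> str:
    proposal = classify(message)
    # Deterministic guardrail: unknown intents or low-confidence guesses go to a human.
    if proposal.intent not in ROUTES or proposal.confidence < min_confidence:
        return "human-review"
    return ROUTES[proposal.intent]


if __name__ == "__main__":
    for msg in ["I want a refund", "Question about my invoice", "hello"]:
        print(f"{msg!r} -> {route(msg)}")
```

Because the routing table and threshold are fixed, a given proposal always produces the same outcome, and every decision traces back to an explicit rule; only the intent guess itself is probabilistic.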

Neurosymbolic AI: The Best of Both Worlds

The next evolution of this hybrid architecture is already taking shape, and it’s known as neurosymbolic AI. This approach formally combines the pattern-recognition strengths of neural networks (the “neuro” part) with the explicit rules and logic of symbolic reasoning (the “symbolic” part). It’s a system designed to reason and act while being able to prove that its actions conform to those explicit rules.

Amazon Web Services (AWS) is at the forefront of productizing this concept with its Automated Reasoning Checks. This feature uses mathematical proofs to validate an AI’s output against a set of ground-truth policies. It can, for instance, prove that a financial report flagged for review contains an unapproved payment by translating the query into formal logic and verifying it against the system’s rules [3].

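This is a monumental leap beyond simply hoping an AI is correct. It provides a mathematically verifiable guarantee that an answer is consistent with the encoded policies, effectively eliminating the risk of undetected hallucination for critical tasks, provided those policies are themselves correct and complete.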
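The core idea of checking an answer against ground-truth policies can be sketched without any AWS service. The Python below is a minimal, hypothetical illustration and not the Automated Reasoning Checks API: policies and the claim are boolean predicates over named facts, and the hypothetical `check_claim` function enumerates every truth assignment consistent with the rules and known facts to decide whether the claim is entailed. The variable names and the single policy rule are assumptions made for the example. Exhaustive enumeration grows exponentially with the number of unknown facts, so practical tools generally hand this work to SAT or SMT solvers.

```python
from itertools import product
from typing import Callable, Dict, List

Facts = Dict[str, bool]
Predicate = Callable[[Facts], bool]


def check_claim(variables: List[str], rules: List[Predicate],
                facts: Facts, claim: Predicate) -> str:
    """Classify `claim` by enumerating all assignments of the unknown variables.

    "valid"        - claim is true in every world consistent with rules and facts
    "invalid"      - claim is false in every consistent world
    "undetermined" - claim is true in some consistent worlds and false in others
    "inconsistent" - no world satisfies the rules and facts together
    """
    unknown = [v for v in variables if v not in facts]
    outcomes = set()
    for values in product([False, True], repeat=len(unknown)):
        world = {**facts, **dict(zip(unknown, values))}
        if all(rule(world) for rule in rules):  # keep only policy-compliant worlds
            outcomes.add(claim(world))
    if not outcomes:
        return "inconsistent"
    if outcomes == {True}:
        return "valid"
    if outcomes == {False}:
        return "invalid"
    return "undetermined"


# Hypothetical policy: a report is flagged for review only if it contains
# an unapproved payment (report_flagged implies contains_unapproved_payment).
VARIABLES = ["report_flagged", "contains_unapproved_payment"]
RULES: List[Predicate] = [
    lambda w: (not w["report_flagged"]) or w["contains_unapproved_payment"],
]

if __name__ == "__main__":
    # Fact extracted from the model's output: the report was flagged.
    facts = {"report_flagged": True}
    print(check_claim(VARIABLES, RULES, facts,
                      lambda w: w["contains_unapproved_payment"]))  # valid
```

If the flag were absent, the same claim would come back `undetermined`, because the policy alone does not settle it; a claim that contradicts the policy, such as "the flagged report contains no unapproved payment", would come back `invalid`.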

The Studio AM Takeaway: Building on Trust

The lesson of 2025 is that the future of enterprise automation will not be built on pure probabilistic guesswork. The risk is too high, the outcomes too unpredictable, and the lack of auditability is a non-starter for any regulated industry.

References

[1] Sweep.io. (2025, December 31). 2025: The Year AI Hit A Wall. https://www.sweep.io/blog/2025-the-year-enterprise-ai-hit-the-system-wall/

[2] Salesforce. (2025, December 27). In 2025, AI Grew Up — and Learned to Play by the Rules. https://www.salesforce.com/news/stories/ai-learned-to-play-by-rules/

[3] VentureBeat. (2025, August 6). For regulated industries, AWS’s neurosymbolic AI promises safe, explainable agent automation. https://venturebeat.com/ai/for-regulated-industries-awss-neurosymbolic-ai-promises-safe-explainable-agent-automation