The $100 Billion 'Stack Gap': Understanding Why Banking AI Pilots Struggle to Scale

It is a frustratingly familiar scene: your bank’s AI pilot was a success, the team hit every milestone, and the initial results were promising. Yet the moment you tried to scale, the momentum evaporated: the project stalled, resources were diverted, and a once-heralded initiative quietly disappeared.

This isn't just a streak of bad luck or a failure of talent. It is a systemic crisis spreading across the financial services industry.

The Reality of the "Stack Gap"

Despite a $31 billion investment in AI in 2024 [1], research from MIT's NANDA initiative shows that 95% of enterprise GenAI pilots fail to deliver any measurable business impact [2]. In banking, much of this failure stems from a fundamental structural mismatch known as the "stack gap."

The industry is currently facing a $100 billion problem caused by trying to layer modern, real-time AI onto infrastructure that was never designed for it.

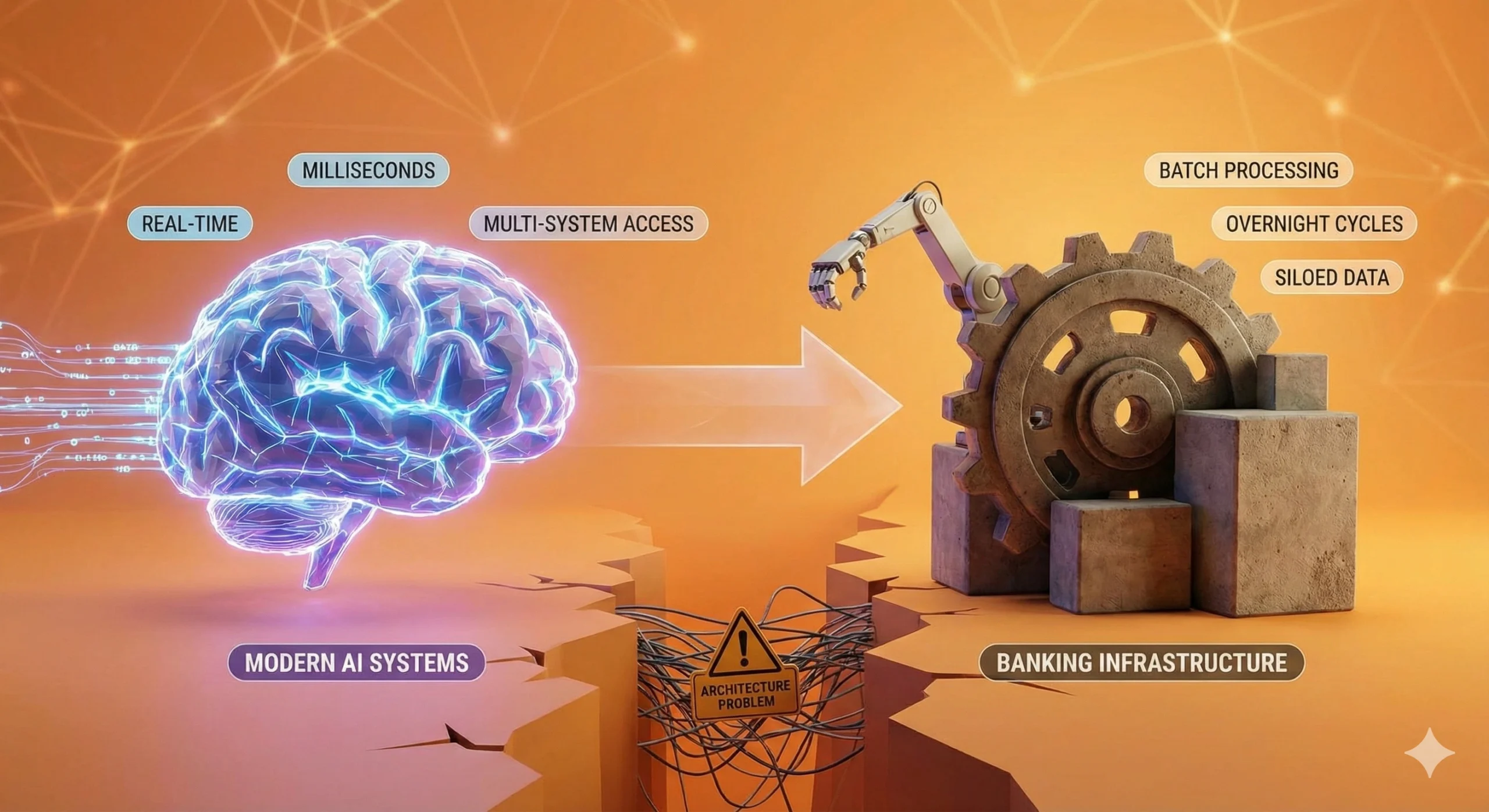

The Architecture Problem

Modern AI systems, particularly those handling real-time decision-making in financial services, have specific infrastructure requirements. They need to process data in milliseconds. They need access to current information across multiple systems simultaneously. They need to operate within strict compliance and governance frameworks. They need to be auditable and transparent in their decision-making.

Most banking infrastructure, by contrast, was architected for different purposes. Core banking systems were built for batch processing—running overnight cycles, processing transactions in defined windows. Customer data lives in multiple repositories, each optimized for specific functions but not designed to communicate seamlessly with others. Integration between systems typically happens through custom point-to-point connections, each requiring manual maintenance and oversight.

When you attempt to layer a real-time, autonomous AI system onto this foundation, the constraints become apparent. The infrastructure was not designed for this use case. This isn't a failure of planning or execution; the underlying architecture has different assumptions built into it.
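
To make the mismatch concrete, the sketch below estimates how stale a record can be when a real-time decision reads from a store that is refreshed only by a nightly batch. The 02:00 batch completion time and the function name `data_age_at_decision` are illustrative assumptions, not details of any particular core banking system.

```python
from datetime import datetime, time, timedelta

# Hypothetical assumption: the batch cycle completes once a day at 02:00.
BATCH_COMPLETION = time(hour=2, minute=0)


def data_age_at_decision(decision_at: datetime) -> timedelta:
    """Return how old batch-refreshed data is at the moment of a decision."""
    last_batch = datetime.combine(decision_at.date(), BATCH_COMPLETION)
    if decision_at < last_batch:
        # Today's batch has not run yet, so the data comes from yesterday's run.
        last_batch -= timedelta(days=1)
    return decision_at - last_batch


if __name__ == "__main__":
    age = data_age_at_decision(datetime(2025, 9, 26, 15, 30))
    print(f"Data age at decision time: {age}")
```

Under these assumptions, a mid-afternoon decision works from data that is more than half a day old, while the AI system itself is expected to respond in milliseconds.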

Where the Real Costs Emerge

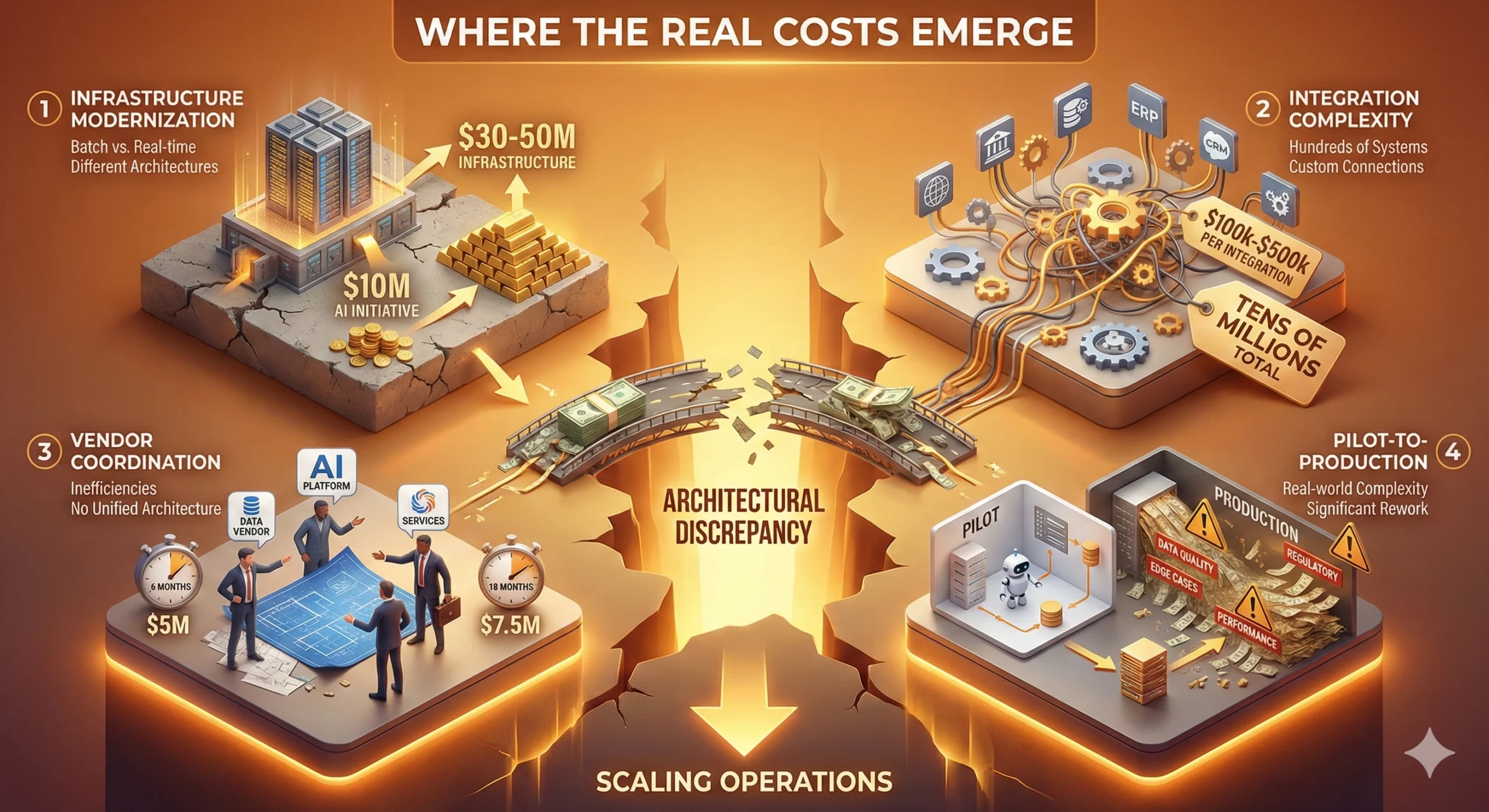

This architectural discrepancy leads to tangible, often unexpected expenses that surface when organizations attempt to scale their operations. We have identified the following key costs:

| Cost Driver | The Reality | Financial Impact |
|---|---|---|
| Infrastructure Modernization | Moving from batch to real-time decision-making requires different database and network architectures. | 3x to 5x multiplier: A $10 million AI initiative often requires $30-50 million in infrastructure investment to actually work at scale. [3] |
| Integration Complexity | A global bank operates hundreds of systems requiring custom feeds. | High integration costs: Each integration point costs $100,000 to $500,000 to build and maintain properly. [3] |
| Vendor Coordination | Multiple vendors (data, platform, services) without unified architecture create friction. | 50% cost overrun: Projects take 18 months instead of 6. Costs of $5 million become $7.5 million. [3] |
| Pilot-to-Production | Pilots use simplified data. Production faces data quality issues and edge cases. | Significant rework: Many organizations find that their pilot architecture doesn't scale to production requirements. [3] |
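
The figures in the table can be turned into a rough per-driver estimate. The sketch below is illustrative only: the function name `scale_cost_breakdown` and the example of 200 integration points are assumptions, while the multipliers and per-integration costs are the ranges quoted in the table [3]. The drivers are reported separately rather than summed, since the source does not say whether they overlap.

```python
# Ranges quoted in the table above (source [3]).
INFRA_MULTIPLIER = (3, 5)              # infrastructure spend per $1 of AI spend
INTEGRATION_COST = (100_000, 500_000)  # USD per integration point
VENDOR_OVERRUN = 0.5                   # 50% overrun attributed to vendor friction


def scale_cost_breakdown(ai_budget: float, integration_points: int) -> dict:
    """Return a (low, high) USD range for each cost driver.

    Hypothetical helper for illustration; it is not a costing method
    taken from the source.
    """
    return {
        "infrastructure": (
            ai_budget * INFRA_MULTIPLIER[0],
            ai_budget * INFRA_MULTIPLIER[1],
        ),
        "integration": (
            integration_points * INTEGRATION_COST[0],
            integration_points * INTEGRATION_COST[1],
        ),
        # The overrun is a single figure, so low and high are the same.
        "vendor_overrun": (ai_budget * VENDOR_OVERRUN, ai_budget * VENDOR_OVERRUN),
    }


if __name__ == "__main__":
    # Assumed inputs: a $10 million initiative touching 200 systems.
    for driver, (low, high) in scale_cost_breakdown(10_000_000, 200).items():
        print(f"{driver}: ${low:,.0f} to ${high:,.0f}")
```

For a $10 million initiative, the infrastructure line reproduces the $30-50 million range from the table; the integration line depends entirely on the assumed number of integration points.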

The Regulatory Layer

The transition from an experimental pilot to a live banking environment is where theoretical success meets regulatory reality. For many institutions, this is the point at which the project fails. The "stack gap" is not merely a technical deficit in processing power or data speed; it is an architectural inability to meet increasingly rigid standards of algorithmic accountability.

The Liability of the Black Box

In a controlled pilot, "black box" outcomes are often tolerated as long as the predictive accuracy is high. In production, that lack of transparency becomes a structural liability. The Colorado AI Act, enacted with an effective date of February 1, 2026 [5] (since postponed to June 30, 2026), codifies this shift by imposing a duty of reasonable care to protect consumers from algorithmic discrimination. For a bank, demonstrating that care in practice requires an audit trail for automated decisions.

If the underlying infrastructure cannot expose the specific data lineage used by an AI model, which is often impossible in fragmented legacy environments, the bank cannot demonstrate that the system is compliant. The project stalls not because the AI failed, but because the infrastructure could not provide the evidence required to deploy it.
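
As a rough illustration of what an audit trail with data lineage might capture, the sketch below records one automated decision together with the sources it read. Every name here (`DecisionRecord`, `LineageEntry`, the field names, and the example system identifiers) is hypothetical; neither the Colorado AI Act nor FINRA prescribes a schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace
from datetime import datetime, timezone


@dataclass(frozen=True)
class LineageEntry:
    source_system: str  # repository the value was read from (hypothetical name)
    field_name: str     # which input the model consumed
    as_of: str          # ISO timestamp at which the source value was current


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    model_version: str
    outcome: str
    decided_at: str
    lineage: tuple = ()

    def fingerprint(self) -> str:
        """SHA-256 over a canonical JSON form of the record.

        Any later change to the record produces a different hash.
        """
        canonical = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Example with made-up values.
record = DecisionRecord(
    decision_id="D-0001",
    model_version="credit-model-1.0",
    outcome="declined",
    decided_at=datetime(2026, 1, 15, 9, 30, tzinfo=timezone.utc).isoformat(),
    lineage=(
        LineageEntry("core_banking", "account_balance", "2026-01-15T02:00:00+00:00"),
    ),
)
altered = replace(record, outcome="approved")
print(record.fingerprint() != altered.fingerprint())
```

The point of the sketch is the `lineage` field: if the source systems cannot supply the `as_of` timestamp and origin for each input, the record cannot be completed, which is the gap described above.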

From Guidance to Governance

While industry standards like the 2026 FINRA guidance [4] are often dismissed as non-binding suggestions, in practice, they serve as the benchmark for "reasonable supervision." FINRA’s focus on the integrity and reliability of supervisory systems means that an AI tool is only as viable as the oversight mechanism surrounding it.

The logical disconnect is clear: financial institutions are attempting to deploy 21st-century intelligence on top of 20th-century record-keeping systems. When FINRA or state regulators ask for a demonstration of "rigorous supervisory control," a bank relying on batch-processed data and siloed archives cannot provide it.

The Cost of Verification

This creates a secondary "stack gap": the cost of verification. Meeting these standards often requires a total overhaul of how data is tagged, stored, and retrieved. When a pilot that cost $2 million to build requires $20 million in "governance wrapping" just to satisfy the compliance department, the economics of the initiative collapse.

For the C-suite, the takeaway is stark: compliance is no longer a post-production checklist. It is a baseline architectural requirement. Banks that fail to integrate auditability into their core stack will find their AI ambitions perpetually stuck in the pilot phase.

Moving Forward

The $100 billion "stack gap" is not a post-mortem for banking AI. Rather, it represents the market’s realization of the true cost of entry. The industry has spent the last two years proving that the math works in a vacuum; it must now face the reality of integrating that math into a rigid, legacy-bound ecosystem.

References

[1] NTT DATA. (2025, September 26). The $100 billion stack gap: Why banking's agentic AI dreams will become infrastructure nightmares. Retrieved from https://us.nttdata.com/en/blog/2025/september/the-100-billion-stack-gap

[2] MIT NANDA Initiative. (2025). Enterprise GenAI Pilot Study. As cited in NTT DATA (2025, September 26).

[3] NTT DATA. (2025, September 26). The $100 billion stack gap: Why banking's agentic AI dreams will become infrastructure nightmares. Retrieved from https://us.nttdata.com/en/blog/2025/september/the-100-billion-stack-gap

[4] FINRA. (2025, December). 2026 FINRA Annual Regulatory Oversight Report: GenAI: Continuing and Emerging Trends. Retrieved from https://www.finra.org/rules-guidance/guidance/reports/2026-finra-annual-regulatory-oversight-report/gen-ai

[5] Skadden, Arps, Slate, Meagher & Flom LLP. (2024, June 24). Colorado's Landmark AI Act: What Companies Need To Know. Retrieved from https://www.skadden.com/insights/publications/2024/06/colorados-landmark-ai-act